Cognitive Continuity: The Missing Architectural Property of AI-Native Infrastructure for Physical AI

10 Feb, 2026

From Convergence to Continuity in the Design of Intelligent Systems

Written by Dr Mallik Tatipamula, FRS FREng FRSE, CTO, Ericsson Silicon Valley

Executive Summary

The evolution from connected things to intelligent environments fundamentally changes what infrastructure must guarantee. In the Internet era, correctness meant delivering data reliably. In the era of Physical AI, where autonomous agents sense, decide, and act across the real world, correctness must expand to preserving meaning, intent, trust, and accountability across distributed intelligence.

This article introduces cognitive continuity as the missing architectural property of AI-native infrastructure: the ability of systems to sustain coherence as cognition flows across agents, layers, institutions, and time. It argues that three structural forces are shaping the future of infrastructure (distributed convergence, distributed cognition, and cognitive continuity) and that future advances across control, compute, and connectivity are all converging toward this single architectural requirement.

The consequence is profound: success will no longer be measured by bandwidth, latency, or scale alone, but by how well infrastructure preserves alignment and governability across autonomous systems.

Introduction

Part I of this series (https://tinyurl.com/2d6tjr5m) argued that the next generation of networks will not merely make connectivity faster, but will transform connected environments into intelligent ones. The shift from the Internet of Things to Physical AI represents a structural change: sensors become agents, endpoints become participants, and networks evolve from transport infrastructure into coordination infrastructure.

If that trajectory holds, a deeper architectural question follows:

Once environments begin to reason and act autonomously, what must the infrastructure itself be responsible for preserving?

The answer is no longer just performance. It is coherence: the sustained alignment of meaning, intent, trust, and behavior across distributed intelligence. I refer to this property as cognitive continuity.

Cognitive continuity reframes infrastructure from a passive substrate that moves data into an active architectural fabric that sustains collective intelligence safely, predictably, and governably at scale.

The Three Forces Shaping the Future

The evolution of infrastructure can be understood as unfolding through three structural stages:

- Architectural convergence, driven by performance demands

- Distributed cognition, driven by the need for collective reasoning

- Cognitive continuity, driven by the requirements of safety, trust, and governance

These are not abstract ideas. They reflect pressures already emerging in the design of autonomous factories, intelligent transportation systems, adaptive infrastructure, and large-scale cyber-physical environments.

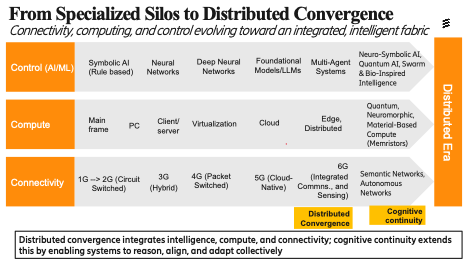

Figure 1 illustrates this progression across control, compute, and connectivity, showing how each domain evolved independently in the past, is converging today, and is now advancing toward a future shaped by the need to preserve coherence as intelligence becomes distributed.

Stage 1: Why Technologies Are Converging

As shown in Figure 1, across control, compute, and connectivity, the same structural shift is underway: movement from centralized, monolithic architectures toward distributed, composable ones.

- Intelligence is spreading across agents rather than residing in a single centralized model

- Compute is moving beyond data centers into cloud, edge, and device environments

- Networks are evolving from static transport toward programmable, adaptive coordination layers

At the same time, the systems now being built, such as autonomous vehicles, intelligent factories, adaptive infrastructure, and large-scale cyber-physical environments, must sense, decide, and act in real time. Their requirements are fundamentally different from those of traditional digital systems. AI must remain grounded in real-world context; compute must respond to urgency and locality; networks must distinguish between routine data and safety-critical intent.

The result is not parallel evolution, but structural fusion. Control, compute, and connectivity are no longer independent stacks; they are converging into a single interdependent architectural fabric. This is not a design preference; it is a functional necessity created by the performance requirements of Physical AI systems.

I refer to this as distributed convergence: distribution within each layer and convergence across layers into one coherent architectural fabric.

Yet convergence alone is insufficient. As intelligence spreads throughout infrastructure, a new challenge emerges: how to sustain coherent collective reasoning across distributed actors.

Stage 2: Why Intelligence Must Become Distributed

Physical AI does not emerge from a single centralized intelligence. It arises from collections of agents embedded across vehicles, robots, devices, infrastructure, and cloud services, each operating with partial context, local responsibility, and real-time constraints.

Tasks must be decomposed. Context must be shared. Decisions must be coordinated. Intent must be negotiated across boundaries.

This is the shift toward distributed cognition: the emergence of collective intelligence across many autonomous agents rather than a single centralized mind.

Distributed cognition is not simply a scaling strategy. It is the natural architecture of intelligent physical systems. A factory cannot rely on a single global controller. A city cannot depend on one brain. A transportation system cannot function through a single planner. Intelligence must live throughout the environment, cooperating across layers and locations.

But once cognition becomes distributed, a deeper architectural challenge emerges: coherence becomes fragile.

Stage 3: Why Cognitive Continuity Becomes Essential

When intelligence becomes distributed, coherence becomes the limiting factor.

A misinterpreted intent can propagate across dozens of agents. A semantic mismatch between layers can cascade into unsafe behavior. A policy interpreted differently at the edge and in the cloud can produce outcomes no one designed. Trust assumptions can silently break across organizational boundaries. Accountability becomes difficult to trace.

These are not performance failures. They are coherence failures.

In classical networking, correctness was syntactic: deliver the packet intact and the system succeeds. In AI-native systems, correctness becomes semantic. It is no longer enough that information arrives. It must arrive with the right meaning, the right context, the right obligations, and the right interpretation of responsibility.
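
The gap between syntactic and semantic correctness can be made concrete with a small sketch. The Python below is an illustration only: the speed-limit scenario, the function names (`send`, `receive`, `to_kmh`), and the message layout are all assumptions introduced here, not part of any real protocol. It shows a message that passes an integrity check yet is interpreted differently by sender and receiver, and the same message with its unit made explicit.

```python
from __future__ import annotations

import json
import zlib

MPH_TO_KMH = 1.609344  # exact by definition of the international mile


def to_kmh(value: float, unit: str) -> float:
    """Interpret a speed value according to an explicit unit."""
    if unit == "km/h":
        return float(value)
    if unit == "mph":
        return value * MPH_TO_KMH
    raise ValueError(f"unknown unit: {unit}")


def send(payload: dict) -> tuple[bytes, int]:
    """Serialize a payload and attach a CRC32 checksum (syntactic integrity)."""
    raw = json.dumps(payload, sort_keys=True).encode("utf-8")
    return raw, zlib.crc32(raw)


def receive(raw: bytes, checksum: int) -> dict:
    """Verify the checksum and decode. This says nothing about meaning."""
    if zlib.crc32(raw) != checksum:
        raise ValueError("payload corrupted in transit")
    return json.loads(raw)


# Case 1 (hypothetical): the number arrives intact, but the unit is implicit.
bare = receive(*send({"speed_limit": 30}))
sender_meant = to_kmh(bare["speed_limit"], "mph")       # sender's convention
receiver_assumed = to_kmh(bare["speed_limit"], "km/h")  # receiver's convention
meaning_preserved = sender_meant == receiver_assumed    # False: delivery succeeded, meaning did not

# Case 2 (hypothetical): the unit travels with the value.
explicit = receive(*send({"speed_limit": {"value": 30, "unit": "mph"}}))["speed_limit"]
receiver_explicit = to_kmh(explicit["value"], explicit["unit"])
```

In both cases the checksum passes. Only in the second case does the receiver recover what the sender meant, which is the sense in which correctness becomes semantic rather than syntactic.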

This is where cognitive continuity becomes the architectural requirement.

Cognitive continuity is the ability of infrastructure to preserve coherence of meaning, intent, trust, and behavior as intelligence flows across distributed systems over time.

Without continuity, convergence produces power without stability. Distributed cognition produces intelligence without reliability. With continuity, autonomy becomes governable.

A Concrete Illustration

Imagine a future emergency-response ecosystem where autonomous drones, hospitals, traffic systems, and city command platforms operate as independent agents.

Each system is locally optimized. Each uses sophisticated AI. Each acts with good intent.

Yet without cognitive continuity:

- Drones optimize fastest routing

- Hospitals optimize bed utilization

- Traffic systems optimize throughput

- Command centers optimize policy compliance

Together, these local optimizations can produce globally unsafe outcomes: delayed trauma care, misallocated resources, conflicting priorities, and untraceable accountability.

What fails in such a system is not intelligence, but coherence. The infrastructure lacks a shared substrate for preserving meaning, intent, trust, and responsibility across agents.
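
A toy model can make this failure mode easier to see. The sketch below is purely illustrative: the four candidate plans, the per-agent cost columns, and every number in `PLANS` are invented for the example, and the agents are reduced to a single `min` over one column. Under those assumptions, each agent picks a different plan when it optimizes locally, and all agents agree once they score plans against one shared intent (time to trauma care).

```python
# Hypothetical candidate response plans. All values are made up for illustration.
PLANS = {
    "A": {"drone_cost": 5, "hospital_cost": 0.90, "traffic_cost": 0.60, "time_to_care_min": 29},
    "B": {"drone_cost": 8, "hospital_cost": 0.40, "traffic_cost": 0.70, "time_to_care_min": 21},
    "C": {"drone_cost": 9, "hospital_cost": 0.80, "traffic_cost": 0.30, "time_to_care_min": 24},
    "D": {"drone_cost": 6, "hospital_cost": 0.50, "traffic_cost": 0.40, "time_to_care_min": 14},
}

# Each agent's local objective is one column of the table above.
LOCAL_OBJECTIVES = {
    "drone": "drone_cost",        # stands in for fastest routing
    "hospital": "hospital_cost",  # stands in for bed utilization
    "traffic": "traffic_cost",    # stands in for throughput
}


def best_plan(cost_key: str) -> str:
    """Return the plan name that minimizes the given cost column."""
    return min(PLANS, key=lambda name: PLANS[name][cost_key])


# Without a shared intent, every agent optimizes its own column.
local_picks = {agent: best_plan(key) for agent, key in LOCAL_OBJECTIVES.items()}
locally_coherent = len(set(local_picks.values())) == 1

# With a shared intent, every agent scores plans against the same objective.
shared_picks = {agent: best_plan("time_to_care_min") for agent in LOCAL_OBJECTIVES}
shared_coherent = len(set(shared_picks.values())) == 1
```

In this toy setup the plan chosen under the shared intent is none of the locally optimal plans, which mirrors the point above: each agent behaves sensibly, and the incoherence only appears at the level of the whole system.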

How Architecture Evolves to Support Continuity

The most important insight from Figure 1 is that advances across control, compute, and connectivity are converging toward a common architectural purpose: preserving coherence as intelligence becomes distributed. The emerging technologies below are not independent innovations; they are architectural responses to the same systemic requirement.

Control: Making Meaning Explicit and Negotiable

As cognition becomes distributed, coordination fails when meaning remains implicit. Many emerging approaches in AI control architectures can be understood as mechanisms for stabilizing meaning across agents rather than merely improving intelligence.

Key trajectories include:

- Neuro-symbolic and structured reasoning systems that represent goals, constraints, obligations, and policies explicitly rather than only statistically. This enables agents to exchange intent in forms that are interpretable and verifiable across domains.

- Multi-agent coordination frameworks where agents negotiate objectives, resolve conflicts, and converge on shared plans rather than operating as isolated optimizers. These architectures reduce semantic drift by creating shared representations of commitments and responsibilities.

- Collective intelligence and swarm-inspired models that produce globally coherent behavior without centralized control. Their value is not performance but stability: the ability to preserve alignment under uncertainty, adaptation, and partial failure.

- Governance-aware AI architectures where accountability, escalation, and override mechanisms are embedded within control logic rather than imposed externally. These models treat responsibility as a first-class architectural concern.

These directions are not about making AI more capable. They are about making meaning persistent, interoperable, and governable across distributed cognition. Emerging work on machine-interpretable norms, ethical constraints, and value-aligned representations further reflects the need for continuity not only of meaning, but of acceptable behavior across socio-technical systems.
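
One way to picture "explicit and verifiable" intent is as a data structure that any agent can check an action against. The sketch below is a minimal illustration under stated assumptions: the `Intent`, `Constraint`, and `Decision` types, the `evaluate` function, and the drone-delivery example are all hypothetical names introduced here, not an existing framework. It also shows escalation embedded in the control logic rather than handled externally.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Callable, Optional


@dataclass(frozen=True)
class Constraint:
    """A named, machine-checkable condition on a proposed action."""
    name: str
    holds: Callable[[dict], bool]


@dataclass(frozen=True)
class Intent:
    """An explicit statement of goal, constraints, and who to escalate to."""
    goal: str
    issued_by: str
    constraints: tuple[Constraint, ...] = ()
    escalate_to: str = "human_operator"


@dataclass(frozen=True)
class Decision:
    allowed: bool
    violated: list[str]
    escalate_to: Optional[str]


def evaluate(intent: Intent, action: dict) -> Decision:
    """Check a proposed action against every constraint carried by the intent."""
    violated = [c.name for c in intent.constraints if not c.holds(action)]
    if violated:
        # Accountability is part of the result: the decision names who must review it.
        return Decision(False, violated, intent.escalate_to)
    return Decision(True, [], None)


# Hypothetical example: a delivery intent with two explicit constraints.
intent = Intent(
    goal="deliver_blood_unit",
    issued_by="city_command",
    constraints=(
        Constraint("max_altitude_120m", lambda a: a["altitude_m"] <= 120),
        Constraint("avoid_no_fly_zone", lambda a: a["zone"] != "no_fly"),
    ),
)

ok = evaluate(intent, {"altitude_m": 90, "zone": "corridor"})
blocked = evaluate(intent, {"altitude_m": 150, "zone": "corridor"})
```

Because the constraints are named and carried with the intent, a blocked action can report exactly which obligation it violated and who is responsible for the override, rather than failing silently inside one agent's model.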

Compute: Preserving Context, State, and Grounding Over Time

A central failure mode in Physical AI systems is loss of contextual continuity. When cognition becomes detached from sensing, embodiment, and temporal context, systems can remain logically correct yet become causally disconnected from reality.

Emerging compute architectures increasingly respond to this requirement:

- Edge-grounded and embedded intelligence keeps cognition proximal to perception and actuation, preserving causal coupling between observation, decision, and action

- In-sensor and near-sensor computing reduces the semantic gap between what is observed and what is inferred, preventing drift introduced by abstraction layers and batching pipelines

- Stateful substrates and memory-centric architectures (including neuromorphic approaches) emphasize persistence of internal state over time, enabling continuity of reasoning rather than isolated inference events

- Digital twins and persistent world models allow agents to maintain continuity of situational awareness across time, actors, and domains, rather than reconstructing context episodically

- Material and embodied computation (where sensing, memory, and processing are co-located in physical substrates) matters not for novelty but because it preserves grounding: intelligence remains continuously coupled to the physical reality it must govern

These directions are not optimizations for throughput. They are structural responses to the need for continuity of state, context, and causality in autonomous systems. Increasingly, this also includes continual and lifelong learning architectures designed to preserve identity, alignment, and coherence of behavior over time rather than resetting semantics through episodic retraining.
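
As a rough illustration of continuity of state, the sketch below contrasts with a stateless inference call by keeping observations between queries and tracking how old they are. The `WorldModel` class, its method names, and the `max_age_s` staleness threshold are assumptions made for this example; a real digital twin or persistent world model would be far richer.

```python
from __future__ import annotations

from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Observation:
    value: float
    observed_at: float  # timestamp in seconds


class WorldModel:
    """A tiny persistent state store that knows when its context is stale."""

    def __init__(self, max_age_s: float) -> None:
        self.max_age_s = max_age_s
        self._state: dict[str, Observation] = {}

    def observe(self, key: str, value: float, now: float) -> None:
        """Record the latest observation for a key, with its timestamp."""
        self._state[key] = Observation(value, now)

    def query(self, key: str, now: float) -> Optional[float]:
        """Return the last value if it is still fresh, otherwise None."""
        obs = self._state.get(key)
        if obs is None:
            return None  # never observed
        if now - obs.observed_at > self.max_age_s:
            return None  # observed, but no longer grounded in recent sensing
        return obs.value


# Hypothetical usage: one observation, queried at two later times.
model = WorldModel(max_age_s=2.0)
model.observe("door_open", 1.0, now=10.0)
fresh = model.query("door_open", now=11.0)
stale = model.query("door_open", now=13.5)
unknown = model.query("conveyor_speed", now=11.0)
```

The design choice worth noting is that staleness is explicit: the model refuses to answer from context it can no longer vouch for, rather than returning a value that is logically valid but detached from the current physical state.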

Connectivity: From Packet Transport to Meaning Preservation

Today’s networks move packets with extraordinary precision, yet they discard meaning, intent, and trust semantics at every hop. As cognition becomes distributed across agents, institutions, and infrastructures, this syntactic-only model becomes a systemic liability.

Future connectivity architectures evolve toward supporting continuity directly:

- Semantic and intent-aware networking allows infrastructure to distinguish safety-critical actions from best-effort traffic and to treat them differently based on meaning rather than address alone

- Policy-aware orchestration across domains enables constraints, obligations, and permissions to travel with tasks and data rather than being reinterpreted locally at each boundary

- Native identity, provenance, and accountability primitives support continuity of authority and responsibility as decisions propagate across organizations and systems

- Agent-to-agent protocols that carry goals, constraints, confidence, and commitments, not just payloads, become essential for coordination across heterogeneous intelligent actors

- Integrated sensing and communication architectures matter because distributed agents can only remain aligned if they share consistent grounding in the physical world

- Autonomous adaptation mechanisms become necessary because static configuration cannot preserve coherence under dynamic, evolving multi-agent behavior
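
To make the first item in this list more tangible, the following sketch shows one simple way a forwarding element could treat traffic by declared intent rather than by address. It is an assumption-laden illustration: the `IntentClass` levels, the `IntentAwareQueue` class, and the message contents are hypothetical, and real schedulers would also need admission control and authentication of the declared intent.

```python
import heapq
import itertools
from enum import IntEnum


class IntentClass(IntEnum):
    """Hypothetical intent classes; a lower value is served first."""
    SAFETY_CRITICAL = 0
    COORDINATION = 1
    BEST_EFFORT = 2


class IntentAwareQueue:
    """Priority queue keyed on declared intent, FIFO within each class."""

    def __init__(self) -> None:
        self._heap = []
        self._seq = itertools.count()  # tie-breaker that preserves arrival order

    def push(self, message: dict, intent: IntentClass) -> None:
        # The unique sequence number ensures the message dict is never compared.
        heapq.heappush(self._heap, (int(intent), next(self._seq), message))

    def pop(self) -> dict:
        return heapq.heappop(self._heap)[2]

    def __len__(self) -> int:
        return len(self._heap)


# Hypothetical traffic arriving in this order.
queue = IntentAwareQueue()
queue.push({"type": "telemetry"}, IntentClass.BEST_EFFORT)
queue.push({"type": "emergency_stop"}, IntentClass.SAFETY_CRITICAL)
queue.push({"type": "plan_update"}, IntentClass.COORDINATION)
queue.push({"type": "log_upload"}, IntentClass.BEST_EFFORT)

served = []
while len(queue) > 0:
    served.append(queue.pop()["type"])
```

The emergency stop is served first even though it arrived second, and the two best-effort messages keep their arrival order relative to each other.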

These shifts are not feature additions. They are architectural responses to the requirement that infrastructure itself must participate in preserving meaning, trust, and continuity across distributed intelligence. Critically, this includes support for temporal continuity: preserving coherence across model versions, policy updates, and evolving agent behaviors, as well as continuity of identity as agents migrate, delegate, and operate across domains.
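
Temporal continuity and provenance can be sketched together as an envelope that carries its policy version, constraints, and handling history with the task. Everything named below (`Envelope`, `forward`, `PolicyMismatch`, the policy identifiers and hop names) is hypothetical and chosen for illustration. The sketch assumes the simplest possible rule: a hop may handle a task only if it supports the exact policy version the task was issued under.

```python
from __future__ import annotations

from dataclasses import dataclass, replace
from typing import Optional


class PolicyMismatch(Exception):
    """Raised when a hop cannot interpret the policy version a task carries."""


@dataclass(frozen=True)
class Envelope:
    task: str
    policy_id: str
    policy_version: int
    constraints: tuple[str, ...]      # travel with the task, unchanged
    provenance: tuple[str, ...] = ()  # ordered list of actors that handled it


def forward(env: Envelope, hop: str, supported: dict[str, set[int]]) -> Envelope:
    """Accept the envelope at a hop, or refuse instead of reinterpreting it."""
    if env.policy_version not in supported.get(env.policy_id, set()):
        raise PolicyMismatch(
            f"{hop} does not support {env.policy_id} v{env.policy_version}"
        )
    # Constraints are copied as-is; only the provenance chain grows.
    return replace(env, provenance=env.provenance + (hop,))


# Hypothetical flow: command platform -> edge gateway -> drone.
env0 = Envelope(
    task="route_patient",
    policy_id="triage",
    policy_version=3,
    constraints=("max_time_to_care_min<=20",),
    provenance=("city_command",),
)
env1 = forward(env0, "edge_gateway", {"triage": {3, 4}})

rejected: Optional[str] = None
try:
    forward(env1, "drone_7", {"triage": {2}})  # drone still runs an older policy
except PolicyMismatch as exc:
    rejected = str(exc)
```

Refusing on a version mismatch is a deliberately strict choice for the sketch. A real system might instead negotiate a compatible version or escalate, but the point stands that the mismatch is detected at the boundary rather than silently producing a different interpretation.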

Taken together, these trajectories describe systems designed not merely to support intelligence, but to preserve coherence, accountability, and intent as intelligence becomes distributed.

They explain why so many seemingly diverse research directions (neuro-symbolic AI, multi-agent systems, neuromorphic computing, digital twins, semantic networking, and embodied intelligence) are in fact converging on the same architectural destination: infrastructure capable of sustaining cognitive continuity.

Why This Matters Beyond Technology

This shift is not only technical. It is societal.

As autonomous systems increasingly shape transportation, healthcare, energy, manufacturing, and governance, society will demand not only efficiency but predictability, safety, and accountability. These properties cannot be bolted on afterward through regulation alone. They must be supported architecturally.

Over time, cognitive continuity will become encoded into:

- Standards and protocols

- Certification regimes

- Regulatory frameworks

- Institutional governance models

Just as reliability, interoperability, and security became infrastructural expectations of the Internet, coherence will become an infrastructural obligation for AI-native systems.

The defining question will no longer be:

“How much bandwidth does this provide?”

But instead:

“How well does this preserve alignment across distributed intelligence?”

Conclusion

What ultimately emerges from this progression is a coherent architectural story.

- Architectural convergence is driven by performance: systems must sense, decide, and act in real time, forcing control, compute, and connectivity to fuse into a single fabric.

- Distributed cognition is driven by collective intelligence: once systems become autonomous, intelligence itself must be distributed across agents that coordinate, negotiate, and reason together.

- Cognitive continuity is driven by safety, trust, and governance: as intelligence scales across environments and institutions, coherence of meaning, intent, and behavior must be preserved.

Visibility demanded connectivity. Intelligence demanded convergence and distributed cognition. Coherence now demands continuity.

Without continuity, convergence produces power without stability and intelligence without governability. With continuity, distributed intelligence becomes trustworthy, scalable, and societally deployable.

Part I argued that we are moving from connected things to intelligent environments. Part II argues that intelligence alone is not enough. What must come next is coherence: cognitive continuity.

That, ultimately, will define the success of AI-native systems in the decade ahead. Research hubs such as CHEDDAR play a vital role in advancing this thinking, bringing together research, industry, and systems insight to shape how future networks preserve coherence, not just performance.